Machine Learning Makes Complexity Computable

PLAN D develops and trains AI models at production level. The result: robust AI systems that hold up in everyday operations.

From Correlation to Prediction

Machine learning, also known as statistical learning, is based on statistics and probability. Instead of manually programming rules, AI algorithms learn from historical data. They identify correlations, weight influencing factors, and calculate reliable forecasts from them.

The more data available, the more precise the model. Training, validation, and continuous adjustment ensure that raw correlations develop into dependable predictions.

Data science provides the analytical foundation. Deep learning identifies complex relationships. Computer Vision lets systems analyze images and videos; NLP enables them to process language and text.

Machine Learning in Practice

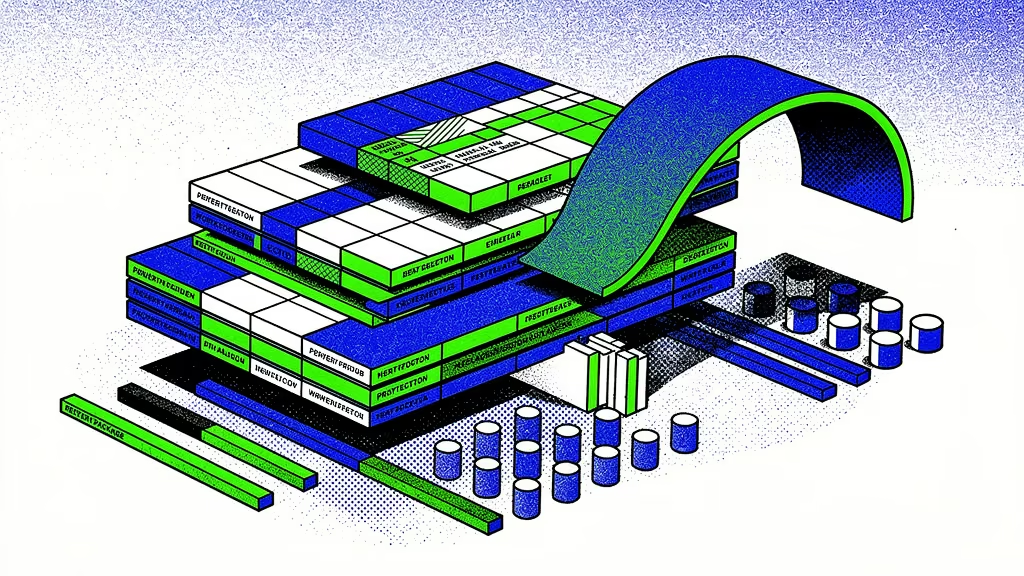

From Dataset to Production-Ready AI Model

1. Exploratory Data Analysis

We start with an in-depth analysis of your data. We examine data quality, distributions, and relationships, and reflect our findings with internal experts. This makes clear which data points will actually drive the AI model.

2. Data Engineering

We clean faulty values, close gaps, and harmonize data formats. External data sources are added selectively when they demonstrably improve model quality.

3. Feature Engineering

We identify the relevant attributes in your data from which the AI model actually learns during training. The goal is maximum predictive power at minimum complexity.

4. AI Approach

We evaluate multiple mathematical approaches and AI model types suited to your challenge. The model with the best results wins out.

5. Model Training & Validation

We train the AI model on your data and measure its quality against clearly defined metrics. Based on these results, we improve the model in a targeted, iterative way.

6. Proof of Concept

A proof of concept makes the AI model usable early on. Your team tests it in everyday operations, provides feedback, and we incorporate those insights directly into the next iteration.

7. Deployment & MLOps

We integrate the AI model into your infrastructure for production use. Monitoring and automated updates ensure the solution remains stable and up to date over time.

AI Compliance

IT Security, GDPR, and EU AI Act — Covered

We develop, operate, and support AI in Germany in accordance with ISO 27001. Encryption, anonymization, clear architecture, and auditable documentation ensure that data protection, IT security, and regulatory requirements are met.

Cloud or On-Premises?

Your Choice.

AWS

Azure

OnPrem

From Pilot to Production

Our project formats bring machine learning into implementation quickly, with calculable effort and clear milestones.

Questions & Answers

Classical software follows fixed rules defined by developers. Every decision is based on clearly programmed if-then logic.

Machine learning works differently. An AI model is not written with fixed rules but trained on data. It recognizes patterns in historical information and learns from them to make predictions or assessments independently.

While classical software executes exactly what was programmed, a machine learning system develops its behavior autonomously from the data it was trained on.

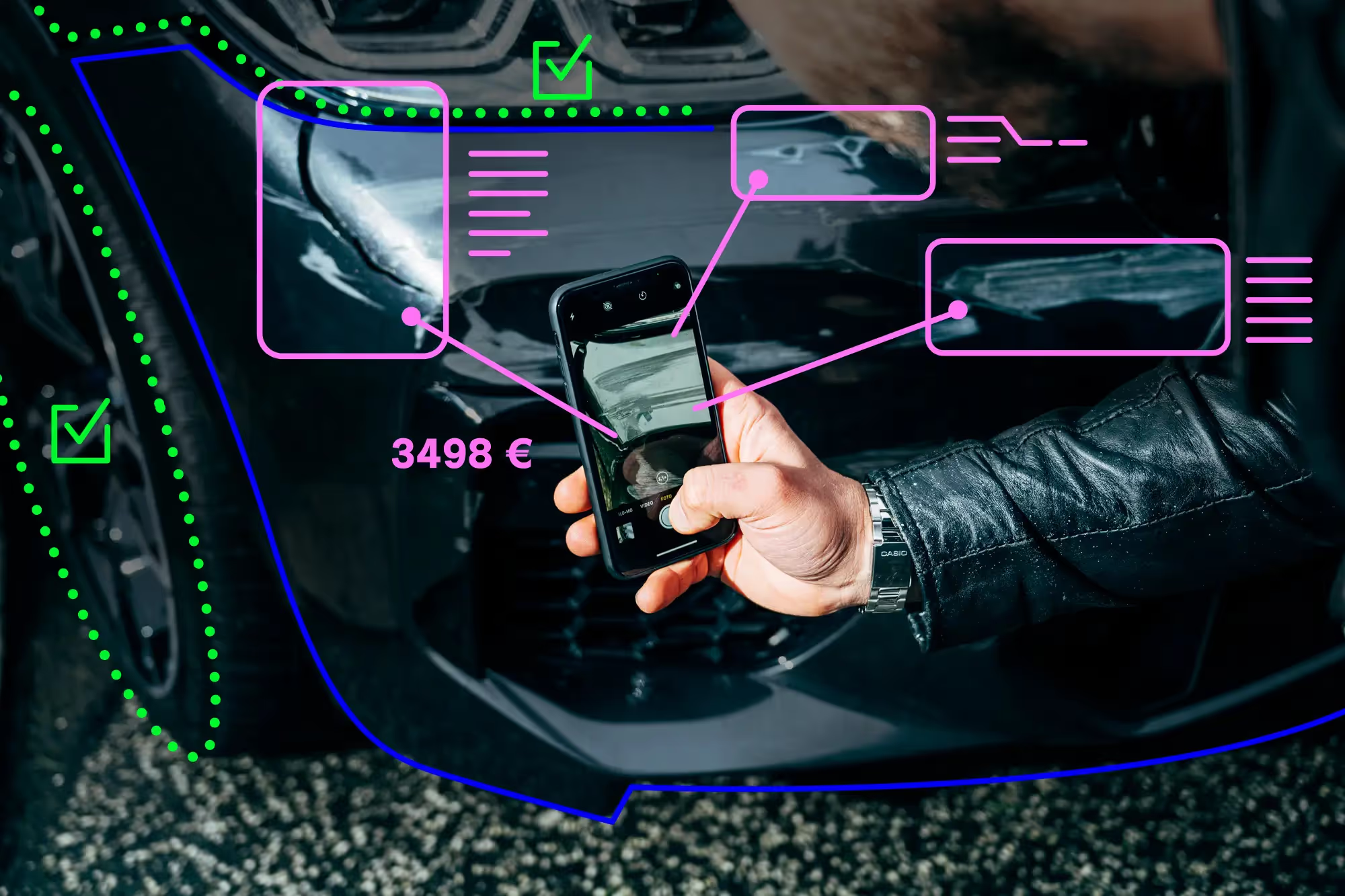

The required amount of data depends heavily on the use case. A simple AI model for clearly structured processes can be meaningfully trained with a few thousand records. Complex tasks, such as those in deep learning or computer vision, require significantly larger data volumes.

What matters most is not quantity but quality. Clean, consistent, and representative data is more important than sheer volume. In many projects, it is possible to start with existing business data, as long as it is structured and contains the information the AI model needs to learn from.

Machine learning pays off especially for processes with high repetition rates and clear structure. When many similar decisions need to be made and large amounts of data are generated, an AI model can handle these tasks faster, more consistently, and at scale.

Machine learning becomes particularly cost-effective when a process has high value creation and automation can reduce time, costs, or errors. The higher the volume and the clearer the problem, the greater the impact.

The costs of AI development depend on the data situation, the complexity of the task, and the integration effort. Typical components include data analysis, development and training of the AI model, testing, and technical integration.

For getting started, we offer clearly scoped project formats at a fixed price. This keeps effort plannable and transparent. Ongoing costs then arise primarily from infrastructure, operations, and the regular updating of the model.

Ongoing costs depend on where and how the AI model is operated. Whether on-premises in your own infrastructure or in the cloud, the sensitivity of the data and the security requirements influence the effort, as do data volume, computing power, and the required scalability.

Costs to plan for include infrastructure, monitoring, support, and continuous model development. We offer all of these services within our AI Tech Team at predictable rates. In our AI projects, costs remain transparent and proportionate to the value created.

The time until a machine learning project generates a return depends heavily on the specific use case. For individually developed AI models trained on your business data, a project can pay off within three months when the use case is well chosen, involves recurring processes, and has high value creation and technical feasibility.

On average, the investment breaks even in about eighteen months. What matters most is process volume, savings potential, and the concrete value the AI model delivers in everyday operations.

First prototypes can emerge within days to weeks depending on the data situation. This makes it possible to see early on whether the chosen approach holds up technically and is viable in practice.

A first productive iteration of an AI model is often realistic within about three months. Larger, higher-value AI systems with significant technical and domain complexity, involving multiple AI models, can be developed and systematically expanded over several years.

An AI model is typically connected to existing systems through clearly defined interfaces. This can happen via API, as a background process, or directly within existing applications, without fundamentally changing the existing system architecture.

If API integration is not practical or feasible, we develop custom user interfaces or standalone applications to make the AI usable. This ensures the AI model is not only technically integrated but genuinely applicable in everyday operations.

Data quality is not assessed in absolute terms but always in relation to a specific AI use case. What matters is whether the data is complete, correct, and representative for exactly the task the AI model is meant to solve.

Typical quality issues include incomplete data, biased or incorrect values, uneven distributions, unsuitable data volumes, ambiguous content, and non-standardized formats. Data privacy and security also play a role, especially for sensitive information.

Good data quality therefore does not mean perfect data. It means data that is structured, reliable, and functionally appropriate for the use case at hand.

The quality of an AI model is assessed using clearly defined performance metrics. They show transparently how reliably a machine learning model performs its task: measurable, comparable, and verifiable over time.

Which metrics are appropriate depends on the specific use case. For forecasting models, the key question is how close the prediction is to the actual outcome. Typical metrics include:

- R² (coefficient of determination): Shows how well the model explains the variance in the target values.

- MAE (Mean Absolute Error): Measures the average absolute deviation between prediction and reality.

- RMSE (Root Mean Squared Error): Weights larger errors more heavily and shows average deviation in the unit of the target variable.

- MAPE (Mean Absolute Percentage Error): Expresses the average deviation as a percentage.

For classification models, the evaluation focuses on how often the model makes correct decisions and which types of errors occur. Common metrics include Precision (accuracy of positive predictions), Recall (hit rate), and the F1-Score (harmonic mean of Precision and Recall).

Beyond that, generalization ability is critical: does the model perform well only on training data or also on new, unseen data? It is also assessed whether data structures change over time, causing the model to lose predictive power through data drift or prediction drift.

In addition to statistical quality, economic impact matters. A model is successful when it measurably improves processes, saves time, or reduces errors. Only the combination of technical accuracy, stability, and economic value shows whether an AI model delivers in production.

How often an AI model needs to be retrained depends on the specific use case. It does not need to be retrained regularly just because time has passed. What matters is whether what the AI is predicting has changed.

A simple example: an AI for recognizing handwritten digits can work reliably for years. The digits 0 through 9 do not change, and people continue to write them in similar ways. An AI model does not forget. It stays as good as it was at training time.

It is different for topics like prices or demand. When prices change significantly or customers buy differently than before, the old examples no longer reflect the current situation. Then the model needs to be trained on new examples so it can make accurate predictions again.

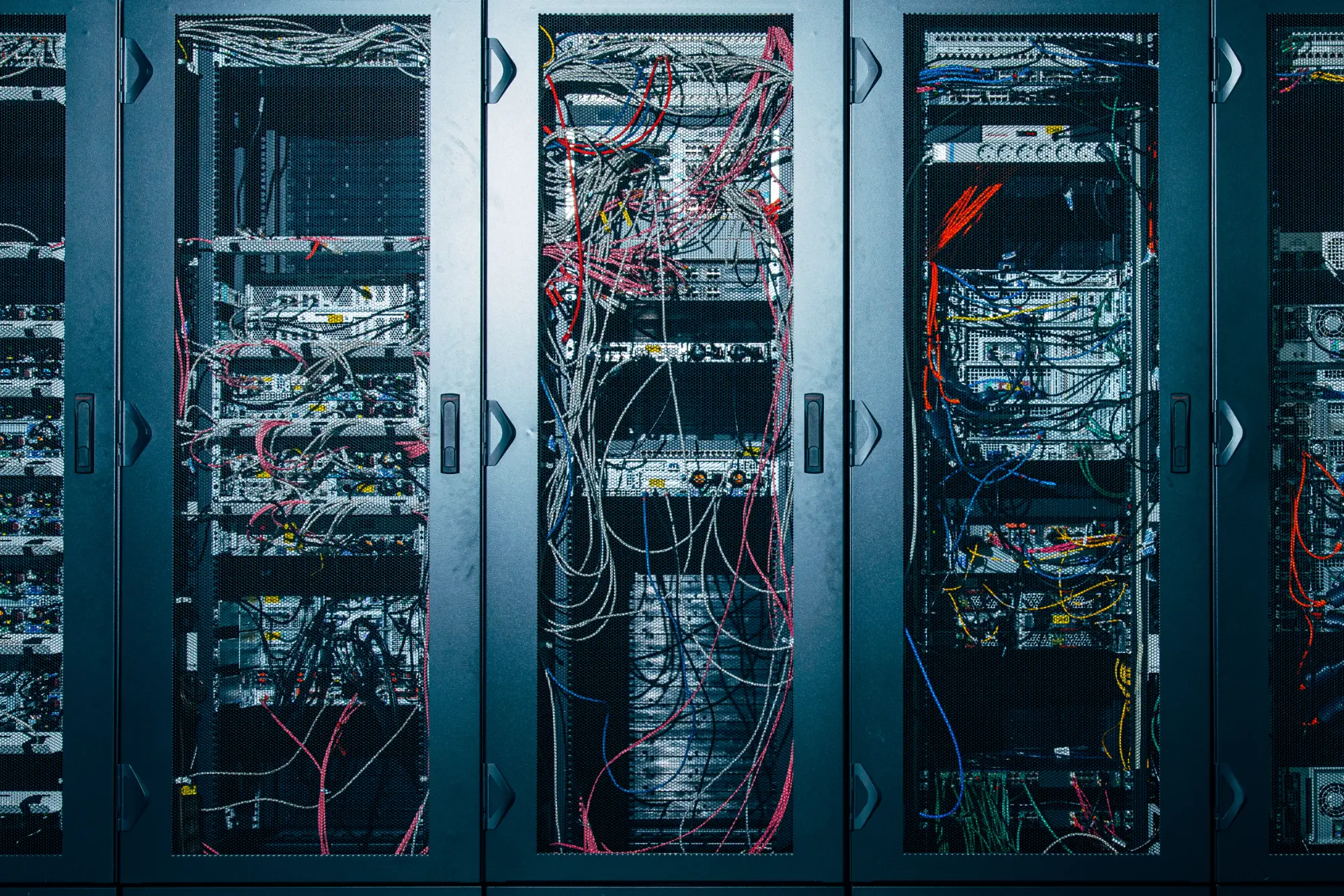

Yes, an AI model can be operated entirely on-premises, meaning within your own infrastructure and without the cloud. We advise on architecture and support installation, integration, and operations.

However, on-premises typically involves higher organizational and technical effort. Infrastructure, security, monitoring, updates, and scaling must be managed internally. This requires the appropriate IT resources.

Cloud providers, by contrast, offer managed services that simplify setup, operations, and maintenance considerably. Features like automatic scaling, monitoring, backups, and high availability are already built in.

The decision between on-premises and cloud therefore depends primarily on security requirements, available IT resources, and the desired operating model.

The AI model developed in the project belongs to you. You receive the complete source code and an unrestricted right of use. This means you can operate the system indefinitely, share it internally, and build on it.

The training data used in the project as well as all results generated by the AI model are also fully in your possession. You retain control and intellectual property over the solution, the code, and the results at all times.

PLAN D develops custom AI models based on your business data with a clear focus on forecast quality and production-ready deployment. Every model is built to be technically sound and ready for integration.

Since 2017, PLAN D has delivered AI projects across a wide range of industries under demanding requirements for integration, security, scalability, and precision. This experience flows into every new engagement. A seasoned team of data scientists, ML engineers, and developers ensures that mathematical modeling, software development, and infrastructure align.

Model quality is assessed using clear metrics and documented in a traceable way. This makes machine learning plannable, measurable, economically effective, and continuously improvable. Our clients receive a reliable AI model with complete code, clear integration capabilities, and full control over results and intellectual property.

Ready when you are

Zukunft beginnt, wenn menschliche Intelligenz künstliche Intelligenz entwickelt. Der erste Schritt ist nur ein Klick.

Since 2017, we have been building AI systems that transform businesses. Let's talk about yours.