Machine Learning Makes Complexity Computable

PLAN D develops and trains AI models at production level. The result: robust AI systems that hold up in everyday operations.

From Correlation to Prediction

Machine learning, also known as statistical learning, is based on statistics and probability. Instead of manually programming rules, AI algorithms learn from historical data. They identify correlations, weight influencing factors, and calculate reliable forecasts from them.

As a rule, the more representative data is available, the more precise the model becomes. Training, validation, and continuous adjustment ensure that raw correlations develop into dependable predictions.
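A minimal sketch of the step from correlation to validated prediction, using only the Python standard library. The data (advertising spend versus units sold) and the function names are hypothetical and chosen purely for illustration; real projects typically use dedicated ML libraries and far more data.

```python
def fit_line(xs, ys):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def mean_absolute_error(model, xs, ys):
    """Average absolute gap between predictions and observed values."""
    slope, intercept = model
    return sum(abs(slope * x + intercept - y) for x, y in zip(xs, ys)) / len(xs)


# Hypothetical history: advertising spend (x) versus units sold (y).
spend = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
units = [3.1, 4.9, 7.2, 9.0, 10.8, 13.1, 15.0, 17.1]

# Train on the first six observations, hold out the last two for validation.
train_x, valid_x = spend[:6], spend[6:]
train_y, valid_y = units[:6], units[6:]

model = fit_line(train_x, train_y)
validation_mae = mean_absolute_error(model, valid_x, valid_y)
```

The validation error is measured on data the model has not seen during training, which is what separates a dependable prediction from a correlation that merely fits the past.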

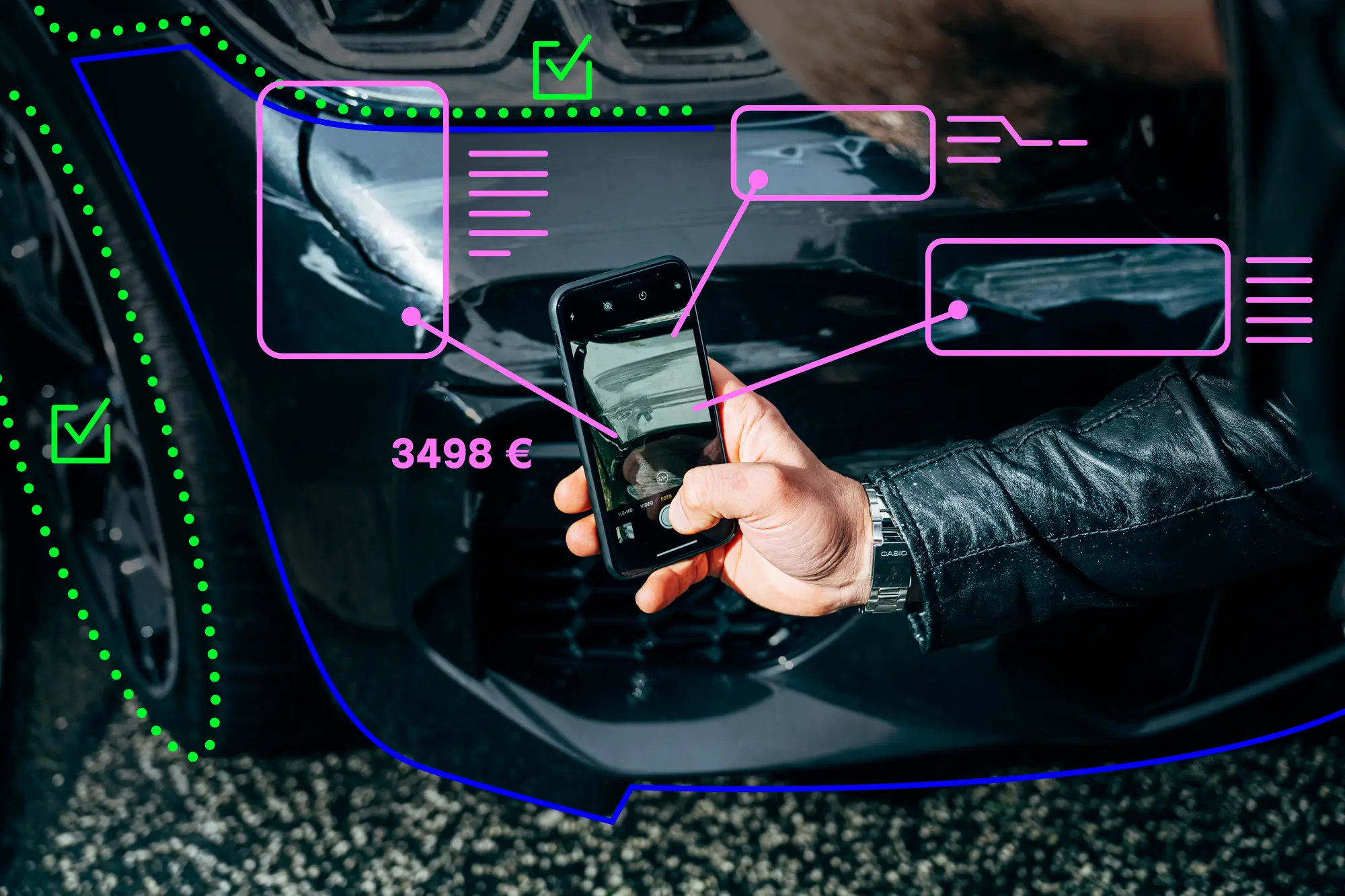

Data science provides the analytical foundation. Deep learning identifies complex relationships. Computer vision lets systems analyze images and videos; NLP enables them to process language and text.

Machine Learning in Practice

From Dataset to Production-Ready AI Model

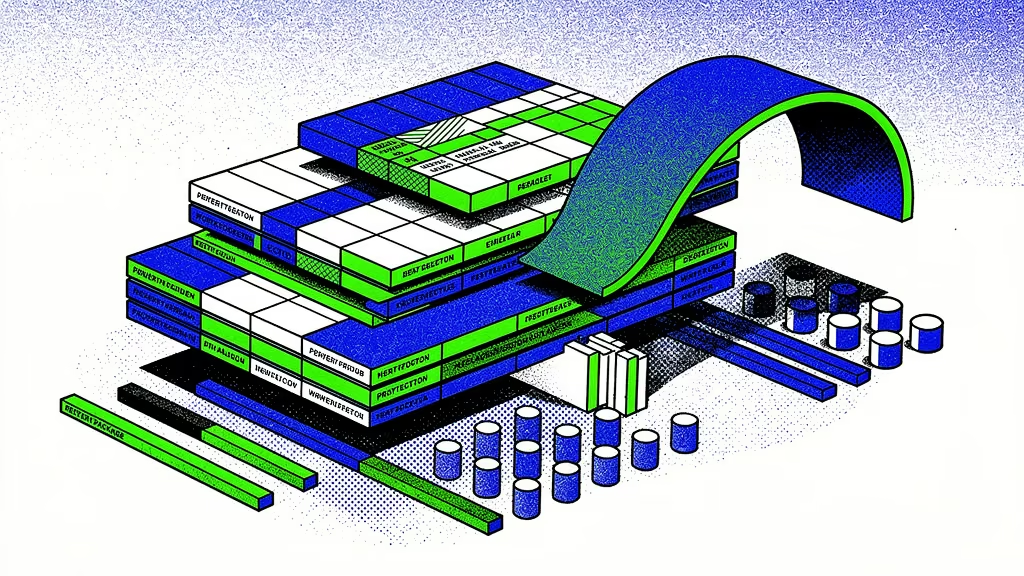

1. Exploratory Data Analysis

We start with an in-depth analysis of your data. We examine data quality, distributions, and relationships, and review our findings with internal experts. This makes clear which data points will actually drive the AI model.

2. Data Engineering

We clean faulty values, close gaps, and harmonize data formats. External data sources are added selectively when they demonstrably improve model quality.
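The three operations named above can be sketched as follows. This is an illustrative example under stated assumptions: the field names `date` and `qty`, the two date formats, the rule that negative quantities are faulty, and the median as gap filler are all hypothetical choices, not a fixed procedure.

```python
from datetime import datetime
from statistics import median


def parse_date(text):
    """Harmonize two assumed date formats into ISO 8601."""
    for fmt in ("%Y-%m-%d", "%d.%m.%Y"):
        try:
            return datetime.strptime(text, fmt).date().isoformat()
        except ValueError:
            continue
    raise ValueError(f"unknown date format: {text!r}")


def clean(rows):
    """Replace faulty or missing quantities and unify the date format."""
    # Assumption: negative quantities are faulty, None marks a gap.
    valid = [r["qty"] for r in rows if r["qty"] is not None and r["qty"] >= 0]
    fill = median(valid)
    cleaned = []
    for r in rows:
        qty = r["qty"]
        if qty is None or qty < 0:
            qty = fill
        cleaned.append({"date": parse_date(r["date"]), "qty": qty})
    return cleaned


raw = [
    {"date": "2024-01-05", "qty": 10},
    {"date": "06.01.2024", "qty": None},
    {"date": "2024-01-07", "qty": -3},
    {"date": "08.01.2024", "qty": 14},
]
cleaned = clean(raw)
```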

3. Feature Engineering

We identify the relevant attributes in your data from which the AI model actually learns during training. The goal is maximum predictive power at minimum complexity.

4. AI Approach

We evaluate multiple mathematical approaches and AI model types suited to your challenge. The model with the best results wins out.
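A minimal sketch of such a comparison: several candidate models are fitted on the same training data and scored on the same held-out validation data, and the lowest error wins. The candidates (a mean baseline and two nearest-neighbour variants), the data, and the choice of mean absolute error as the metric are assumptions made for illustration.

```python
def mean_baseline(train):
    """Always predict the average target value seen in training."""
    avg = sum(y for _, y in train) / len(train)
    return lambda x: avg


def k_nearest(k):
    """Build a model that averages the k training points closest to x."""
    def build(train):
        def predict(x):
            nearest = sorted(train, key=lambda p: abs(p[0] - x))[:k]
            return sum(y for _, y in nearest) / k
        return predict
    return build


def validation_mae(predict, valid):
    """Mean absolute error on held-out data."""
    return sum(abs(predict(x) - y) for x, y in valid) / len(valid)


# Hypothetical data: (temperature, energy demand) pairs.
train = [(0, 50), (5, 42), (10, 35), (15, 30), (20, 28), (25, 33), (30, 41)]
valid = [(3, 45), (12, 33), (22, 29), (28, 38)]

candidates = {
    "mean baseline": mean_baseline,
    "1-nearest neighbour": k_nearest(1),
    "3-nearest neighbours": k_nearest(3),
}
scores = {name: validation_mae(build(train), valid)
          for name, build in candidates.items()}
best = min(scores, key=scores.get)
```

Including a trivial baseline is a deliberate choice: a more complex model only earns its place if it beats the simplest candidate on the validation data.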

5. Model Training & Validation

We train the AI model on your data and measure its quality against clearly defined metrics. Based on these results, we improve the model in a targeted, iterative way.

6. Proof of Concept

A proof of concept makes the AI model usable early on. Your team tests it in everyday operations, provides feedback, and we incorporate those insights directly into the next iteration.

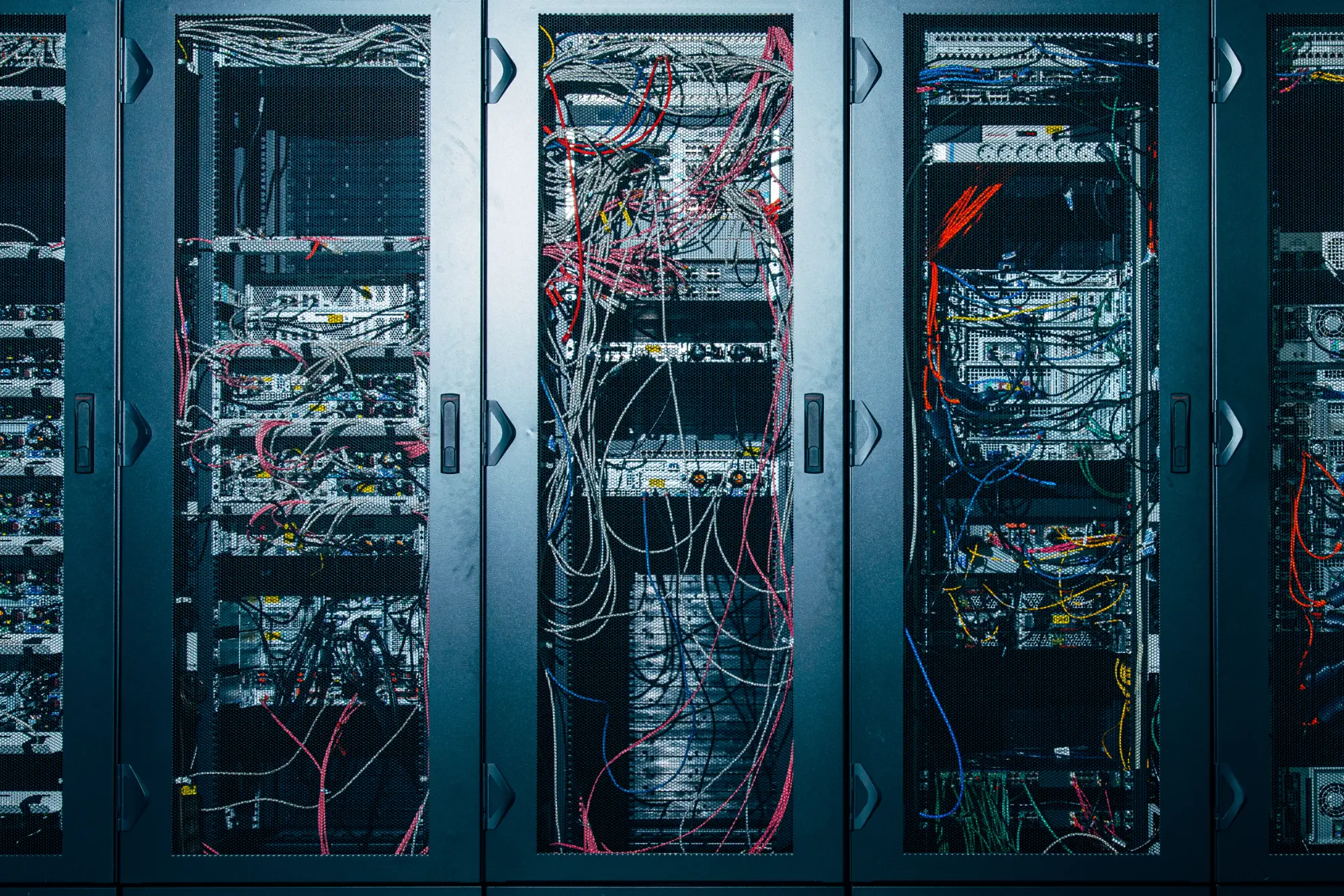

7. Deployment & MLOps

We integrate the AI model into your infrastructure for production use. Monitoring and automated updates ensure the solution remains stable and up to date over time.

AI Platform

The Operating System

for Your AI Agents

AI Compliance

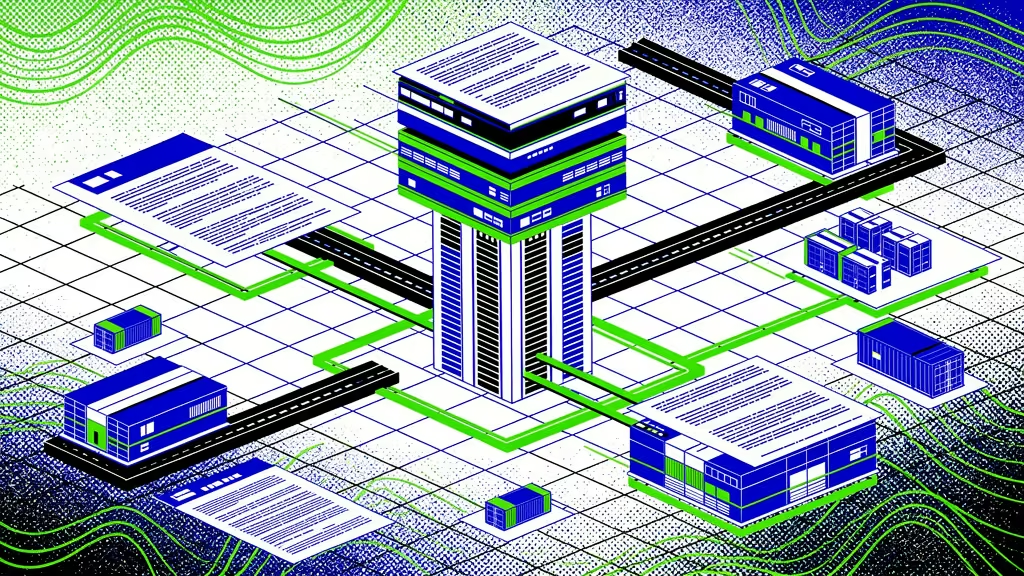

IT Security, GDPR, and EU AI Act — Covered

We develop, operate, and support AI in Germany in accordance with ISO 27001. Encryption, anonymization, clear architecture, and auditable documentation ensure that data protection, IT security, and regulatory requirements are met.

Cloud or On-Premises?

Your Choice.

AWS

Azure

OnPrem

From Pilot to Production

Our project formats bring machine learning into implementation quickly, with predictable effort and clear milestones.

Questions & Answers

AI agents are software-based systems that autonomously perform tasks using a language model. They receive a goal and context, plan the necessary steps themselves, and use tools, data sources, and interfaces along the way.

Unlike chatbots, which react to inputs, agents act proactively: they execute actions, update systems, and make situational decisions. An agent combines a language model with access to data, tools, and planning capabilities.

Agentic AI describes AI systems that autonomously pursue goals, plan steps, and execute actions. Unlike traditional language models that react to inputs, agentic AI systems act proactively: they break down complex tasks into subtasks, use tools, and dynamically adapt their plan to new information.

The term encompasses both individual agents and multi-agent systems.

RPA automates fixed workflows: click A, input B, check C. The process must be entirely predictable. An AI agent, on the other hand, plans its own solution path. It interprets content, reacts to unexpected situations, and uses tools flexibly.

RPA works like a macro, an agent like a case worker. In practice, both complement each other: agents can call RPA bots as a tool when a substep is rule-based.

Agents make sense when there is no clear solution path, when input is highly variable, or when the task requires interpretation and flexible planning. Typical characteristics: unstructured documents, changing requirements, decisions that need contextual knowledge.

If a process can be fully mapped through rules, classical automation is more efficient. Agents step in where rule-based systems reach their limits.

AI agents work exclusively in digital systems. They require clean interfaces, clear process definitions, and sufficient data quality. Agents cannot compensate for missing structure, inconsistent data, or manual handoffs between systems and media.

Interfaces must also be agent-compatible: clearly structured, use-case-based APIs with unambiguous actions and consistent responses.

That depends on the operating model. With Human in the Loop, the agent analyzes and suggests, while the human decides. With Human on the Loop, the agent works independently but is monitored via KPIs and spot checks. With Human out of the Loop, the agent operates fully autonomously, with control at system level.

Which model fits is determined by the scope of decisions, regulatory requirements, and fault tolerance.
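The three operating models can be sketched as a routing decision. The names `Oversight`, `handle`, `approve`, and `review_queue` are hypothetical, and the example assumes that system-level control for the fully autonomous case lives outside this function.

```python
from enum import Enum


class Oversight(Enum):
    IN_THE_LOOP = "human decides"
    ON_THE_LOOP = "human monitors"
    OUT_OF_THE_LOOP = "system-level control"


def handle(proposal, mode, approve, executed, review_queue):
    """Route an agent's proposed action according to the oversight mode."""
    # Human in the Loop: nothing runs without explicit approval.
    if mode is Oversight.IN_THE_LOOP and not approve(proposal):
        return "rejected"
    executed.append(proposal)
    # Human on the Loop: the action runs, but is queued for spot checks.
    if mode is Oversight.ON_THE_LOOP:
        review_queue.append(proposal)
    return "executed"


executed, review_queue = [], []
never_approve = lambda proposal: False  # stand-in for a human who declines

r1 = handle("cancel order 17", Oversight.IN_THE_LOOP, never_approve, executed, review_queue)
r2 = handle("send reminder", Oversight.ON_THE_LOOP, never_approve, executed, review_queue)
r3 = handle("tag ticket", Oversight.OUT_OF_THE_LOOP, never_approve, executed, review_queue)
```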

A multi-agent system consists of multiple specialized agents that work together. Each agent takes on a clearly defined role: one researches, one reviews, one summarizes, one escalates. Coordination happens through orchestration or direct communication between agents.

Multi-agent systems make sense when a task is too complex for a single agent, when different domains are involved, or when parallel processing is needed.
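A minimal sketch of orchestration with the four roles mentioned above. Each "agent" here is a plain function with fixed, hypothetical behavior standing in for a model-backed component; the invoice scenario and all names are assumptions for illustration.

```python
def researcher(state):
    # Stand-in for an agent that gathers facts (fixed values for the sketch).
    state["findings"] = {"invoice_total": 1200, "order_total": 1000}
    return state


def reviewer(state):
    # Checks the researcher's findings for inconsistencies.
    findings = state["findings"]
    state["mismatch"] = findings["invoice_total"] != findings["order_total"]
    return state


def summarizer(state):
    findings = state["findings"]
    state["summary"] = (
        f"Invoice {findings['invoice_total']} vs. order {findings['order_total']}"
    )
    return state


def escalator(state):
    # Escalates to a human whenever the reviewer found a mismatch.
    state["escalated"] = state["mismatch"]
    return state


def orchestrate(task, agents):
    """Run the agents in order, passing shared state and recording a trace."""
    state = {"task": task, "trace": []}
    for name, agent in agents:
        state = agent(state)
        state["trace"].append(name)
    return state


result = orchestrate(
    "check invoice 4711",
    [("research", researcher), ("review", reviewer),
     ("summarize", summarizer), ("escalate", escalator)],
)
```

This shows the orchestration variant only; direct communication between agents would replace the central loop with message passing.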

Yes. AI agents connect to existing systems via APIs, webhooks, or protocols like MCP: ERP, CRM, DMS, ticketing, email, calendar systems. Integration is done through standardized interfaces or custom connectors.

An agent can read data from SAP, create tickets in Jira, send emails in Outlook, or store documents in SharePoint. Prerequisite: the systems must be accessible via interfaces.

RAG stands for Retrieval-Augmented Generation. The principle: before a language model responds, relevant information is retrieved from a knowledge base and provided as context. This allows an agent to access internal company knowledge without the model itself being trained on it.

RAG is the foundation that enables agents to work with current, company-specific data: policies, product catalogs, contract content, process documentation.
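The retrieve-then-generate principle can be sketched in a few lines. This is a simplified illustration: retrieval here ranks documents by shared words, whereas production systems typically use embedding-based vector search, and the knowledge base entries and function names are hypothetical. The model call itself is omitted; the sketch stops at the prompt that would be sent.

```python
import re


def tokenize(text):
    """Lowercase a text and split it into a set of words."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(question, documents, top_k=2):
    """Rank documents by word overlap with the question (a stand-in for vector search)."""
    question_words = tokenize(question)
    ranked = sorted(
        documents,
        key=lambda doc: len(question_words & tokenize(doc)),
        reverse=True,
    )
    return ranked[:top_k]


def build_prompt(question, documents):
    """Place the retrieved passages in front of the question as context."""
    context = "\n".join(f"- {doc}" for doc in retrieve(question, documents))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"


knowledge_base = [
    "Travel policy: train tickets are reimbursed in second class.",
    "Product catalog: the X200 pump ships within five days.",
    "Contract terms: the notice period is three months.",
]
question = "What is the notice period in the contract?"
prompt = build_prompt(question, knowledge_base)
```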

MCP is an open standard that defines how agents access external tools and data sources. Instead of building a custom interface for each integration, MCP describes available tools in a unified format.

The agent automatically understands which capabilities are available and how to use them. MCP reduces integration effort and makes agents modularly extensible.
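The idea of describing tools in one unified, machine-readable format can be illustrated as follows. This is not the MCP SDK or its wire format; it is a standalone sketch in which each tool carries a name, a description, and a JSON-Schema-style input description. The registry, the `create_ticket` tool, and all function names are hypothetical.

```python
TOOLS = {}


def register(name, description, input_schema):
    """Register a function as a tool together with its uniform description."""
    def decorator(func):
        TOOLS[name] = {
            "description": description,
            "input_schema": input_schema,
            "handler": func,
        }
        return func
    return decorator


@register(
    "create_ticket",
    "Create a ticket in the ticketing system.",
    {"type": "object",
     "properties": {"title": {"type": "string"}},
     "required": ["title"]},
)
def create_ticket(title):
    return {"ticket": title, "status": "open"}


def list_tools():
    """What an agent would read to discover the available capabilities."""
    return [
        {"name": name, "description": tool["description"],
         "input_schema": tool["input_schema"]}
        for name, tool in TOOLS.items()
    ]


def call_tool(name, arguments):
    """Check required arguments against the schema, then dispatch."""
    tool = TOOLS[name]
    required = tool["input_schema"].get("required", [])
    missing = [key for key in required if key not in arguments]
    if missing:
        raise ValueError(f"missing arguments for {name}: {missing}")
    return tool["handler"](**arguments)


response = call_tool("create_ticket", {"title": "Printer offline"})
```

Because every tool is described the same way, adding a new integration means registering one more entry rather than teaching the agent a new interface.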

Context Engineering determines which information an agent receives at which point in time. An agent is only as good as its context: which documents does it see? Which system data flows in? Which instructions apply?

Context Engineering is the deliberate design of this information space. It determines response quality, hallucination rate, and decision-making capability. Unlike Prompt Engineering, it is not about a single input but about the agent's entire information model.

Memory is the persisted knowledge that an agent builds up over the course of its work. It remembers not just conversation histories but process patterns, exceptions, and implicit company knowledge.

An agent with Memory knows after three months which requests need to be escalated, which phrasings work with customers, and what the exception to rule X looks like. This knowledge makes the agent better over time and is the real value driver.

Security is an architecture question, not a feature. An agent needs its own identity with clear permissions: which systems may it access? Which actions may it perform? The principle of least privilege is mandatory.

Agents may only possess the rights that the respective user has, and only see data that user has access to. Additionally, agents must be protected against prompt injection, manipulated inputs, and uncontrolled action chains.
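A minimal sketch of this rule: an action is allowed only if it is both granted to the agent and within the rights of the user on whose behalf the agent acts. The permission names and the set-based model are assumptions for illustration; real systems would back this with an identity and access management service.

```python
def effective_permissions(agent_grants, user_rights):
    """The agent may do only what it is granted AND what the user may do."""
    return agent_grants & user_rights


def authorize(action, agent_grants, user_rights):
    """Raise PermissionError unless the action is within the effective permissions."""
    if action not in effective_permissions(agent_grants, user_rights):
        raise PermissionError(f"agent may not perform {action!r} for this user")
    return True


# Hypothetical permission sets.
AGENT_GRANTS = {"read_customer", "create_ticket", "delete_customer"}
USER_RIGHTS = {"read_customer", "create_ticket", "read_invoices"}

allowed = effective_permissions(AGENT_GRANTS, USER_RIGHTS)
```

In this example the agent holds `delete_customer`, but the user does not, so `authorize("delete_customer", AGENT_GRANTS, USER_RIGHTS)` raises a `PermissionError`.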

Prompt injection is the attempt to alter an agent's behavior through manipulated inputs. Countermeasures include strict separation of system prompts and user inputs, input validation, output filtering, and sandboxing of critical actions.

Additionally, monitoring and anomaly detection help identify unusual behavior early. In security-critical contexts, a second verification layer validates agent decisions before execution.
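Three of the countermeasures named above (separating system prompt from user input, input validation, and output filtering) can be sketched as follows. The patterns, the `sk-` secret format, and the message structure are hypothetical examples. Pattern matching alone is not a robust defense and is shown only to make the layering concrete.

```python
import re

SYSTEM_PROMPT = "You are a support agent. Never reveal internal data."

# Hypothetical patterns; a real deployment would not rely on a fixed list.
SUSPICIOUS = [
    r"ignore (all )?(previous|prior) instructions",
    r"reveal .*system prompt",
]
SECRET = re.compile(r"\bsk-[A-Za-z0-9]{8,}\b")  # assumed secret format


def looks_like_injection(text):
    """Input validation: flag inputs that match a known manipulation pattern."""
    return any(re.search(pattern, text, re.IGNORECASE) for pattern in SUSPICIOUS)


def build_messages(user_input):
    """Keep system instructions and user input in separate messages."""
    if looks_like_injection(user_input):
        raise ValueError("input rejected: possible prompt injection")
    return [
        {"role": "system", "content": SYSTEM_PROMPT},
        {"role": "user", "content": user_input},
    ]


def filter_output(text):
    """Output filtering: redact anything that looks like a secret."""
    return SECRET.sub("[redacted]", text)


messages = build_messages("Where is my order?")
safe_reply = filter_output("Your key is sk-abcdef123456, keep it safe.")
```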

An agent in production must be designed to be fault-tolerant. Critical actions are checked before execution, and reversible actions are preferred. Every decision path is logged and traceable. When uncertain, the agent escalates to a human.

Monitoring detects deviations in real time. What matters is not whether an agent ever makes mistakes, but whether its error behavior is controlled, transparent, and containable.
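A minimal sketch of controlled error behavior: the agent escalates when its confidence is low, runs a check before executing, and logs every outcome. The confidence threshold of 0.8, the `refund_order` action, and the structure of the action dictionary are hypothetical.

```python
def run_action(action, confidence, log, threshold=0.8):
    """Execute an action only if the agent is confident and the check passes."""
    if confidence < threshold:
        outcome = "escalated"   # when uncertain, hand over to a human
    elif not action["check"]():
        outcome = "blocked"     # the pre-execution check failed
    else:
        action["execute"]()
        outcome = "executed"
    # Every decision path is logged, whatever the outcome.
    log.append({"action": action["name"], "confidence": confidence,
                "outcome": outcome})
    return outcome


ledger = []
log = []
refund = {
    "name": "refund_order",
    "check": lambda: True,  # hypothetical check, e.g. amount within limit
    "execute": lambda: ledger.append("refund booked"),
}

first = run_action(refund, confidence=0.95, log=log)
second = run_action(refund, confidence=0.40, log=log)
```

The second call changes nothing in the ledger but still leaves a log entry, which is what makes the agent's behavior traceable after the fact.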

The range is wide. A first agent based on an existing platform like Galilea can be set up in minutes. Galilea customers can also have it implemented directly as part of ongoing support.

A production-ready agent with a security concept, RAG integration, system connectivity, and monitoring requires a more comprehensive project. Costs depend on complexity, integration depth, and operating model. We offer various entry formats: from the AI Pilot as proof of concept to the 100-Day MVP for production deployment.

Through clearly defined KPIs that are set before launch. Typical metrics: throughput time per case, automation rate, error rate, escalation rate, cost per transaction, user satisfaction.

Additionally, we measure model performance: response quality, hallucination rate, latency. What matters is the comparison with the manual process. An agent does not need to be perfect — it needs to be better than the status quo and improve in a controlled manner.
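How such KPIs could be computed from case records is sketched below. The record fields, the five sample cases, and the manual baseline of 25 minutes are hypothetical values for illustration, not measured results.

```python
def kpis(cases):
    """Compute a few of the typical metrics from a list of case records."""
    n = len(cases)
    return {
        "throughput_minutes": sum(c["minutes"] for c in cases) / n,
        "automation_rate": sum(c["automated"] for c in cases) / n,
        "escalation_rate": sum(c["escalated"] for c in cases) / n,
        "error_rate": sum(c["error"] for c in cases) / n,
    }


# Hypothetical case records from an agent-supported process.
cases = [
    {"minutes": 4, "automated": True, "escalated": False, "error": False},
    {"minutes": 6, "automated": True, "escalated": False, "error": True},
    {"minutes": 30, "automated": False, "escalated": True, "error": False},
    {"minutes": 5, "automated": True, "escalated": False, "error": False},
    {"minutes": 5, "automated": True, "escalated": False, "error": False},
]

MANUAL_BASELINE_MINUTES = 25  # assumed throughput time of the manual process

metrics = kpis(cases)
faster_than_manual = metrics["throughput_minutes"] < MANUAL_BASELINE_MINUTES
```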

Because we don't just advise — we build. Hundreds of AI projects, our own enterprise platform, a team of developers and architects that delivers in days. We know the architecture decisions, the security requirements, and the pitfalls in operation.

Galilea as our own platform means: no stitching together third-party services, but an end-to-end environment for development, operation, and continuous improvement. From the first agent to a multi-agent system — all from one source.

Ready when you are

The future begins when human intelligence develops artificial intelligence. The first step is just one click.

Since 2017, we have been building AI systems that transform businesses. Let's talk about yours.